Docker Machine Remote Deployment AWS EC2

March 4th 2020

Docker is a great tool in development and production for containerizing your apps, so that your deployments are configured the same whether you run them on a local machine or deploy them to a cloud VPS. More recently, Docker Swarm has been the popular way to deploy remote containers, but what if you just want to manage a VPS on AWS, DigitalOcean or one of the many other VPS hosts that support Docker? Luckily, Docker Machine has drivers for many popular hosts, including AWS, Microsoft Azure, DigitalOcean, Google Compute Engine, Linode, Rackspace, Oracle VirtualBox and many others. These drivers let you create a Docker-managed host, whether it is remote or local to your machine. Docker Machine does this by installing the Docker Engine (which is what the docker and docker-compose commands you are used to talk to) on virtual hosts and letting you manage those hosts with the docker-machine command.

Using docker-machine commands (documented at docs.docker.com), you can start, inspect, stop, and restart a managed host, upgrade the Docker client and daemon, and configure a Docker client to talk to your host.
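As a rough sketch of how those operations map onto subcommands, the snippet below prints (rather than runs) one docker-machine invocation per operation. The machine name my-host is a placeholder and machine_lifecycle is a hypothetical helper name used only for illustration; start, inspect, stop, restart, upgrade and env are the docker-machine subcommands for the operations listed above.

```shell
#!/bin/sh
# Print, without executing, the docker-machine command for each
# lifecycle operation on the machine named in $1.
machine_lifecycle() {
  name="$1"
  for sub in start inspect stop restart upgrade env; do
    printf 'docker-machine %s %s\n' "$sub" "$name"
  done
}

# my-host is a placeholder machine name.
machine_lifecycle my-host
```

Printing the commands first is a deliberate choice here: nothing touches a real host until you copy a line into your terminal.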

One popular remote virtual host option is Amazon Web Services, which offers EC2 instances that can be configured to run the Docker Engine and be managed with the Docker Machine utility. Today we’ll walk through setting up the AWS CLI in order to use the amazonec2 driver provided with Docker Machine to create an EC2 instance that acts as a remote Docker host.

Installing and Configuring AWS CLI

AWS CLI v2 is the latest version of the aws command line utility, and AWS offers installers for macOS, Windows and Linux that make downloading and installing it easier:

- Installing the AWS CLI version 2 on macOS

- Installing the AWS CLI version 2 on Windows

- Installing the AWS CLI version 2 on Linux

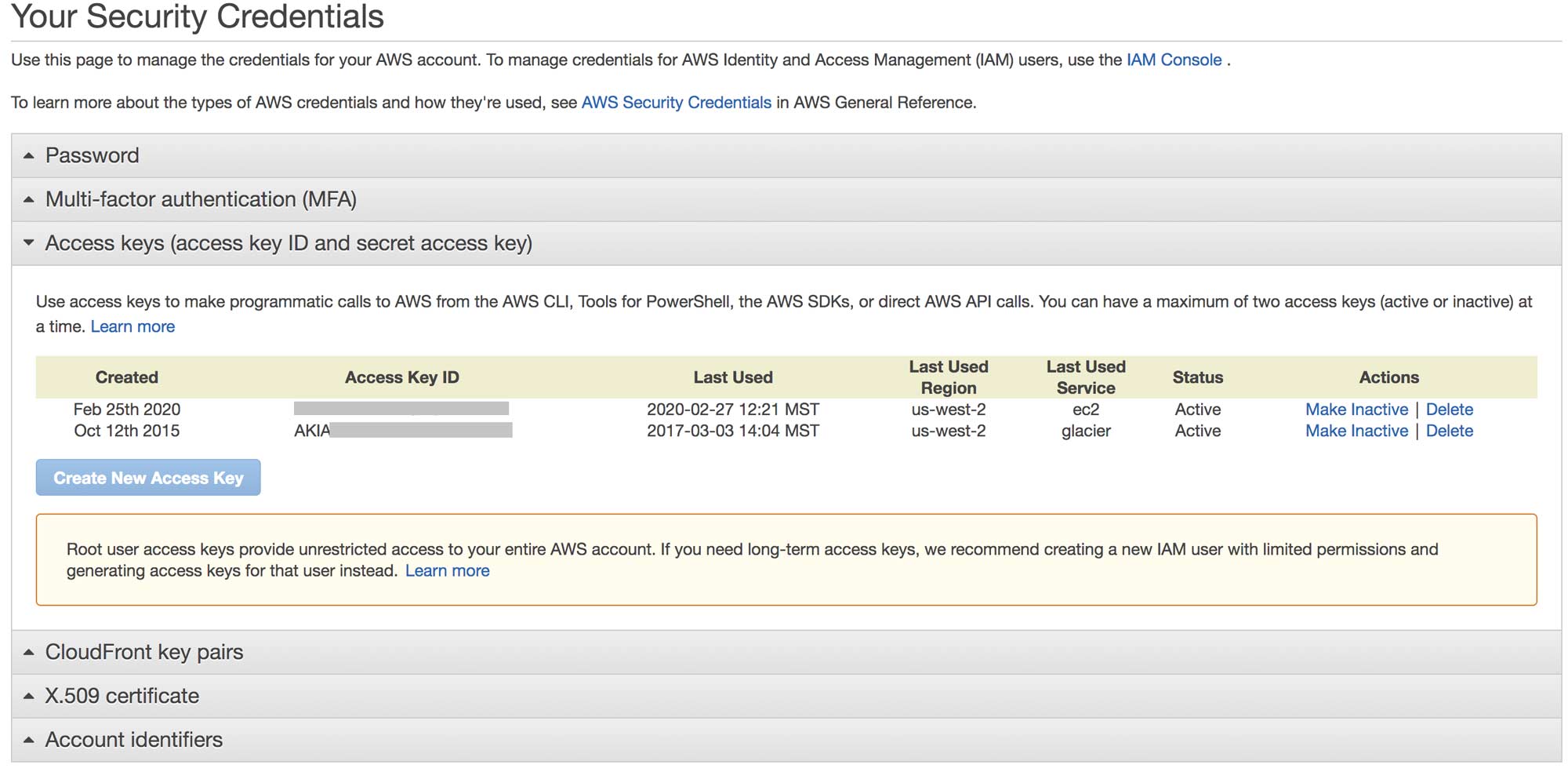

You can follow the installation instructions above for your operating system, and once the AWS CLI is installed we can move on to configuration. Configuration is important because docker-machine (and its amazonec2 driver) needs the AWS CLI configured properly in order to execute the remote commands. Before we can configure anything, we need to log in to the AWS console and create a root access key ID and secret access key. You can log in at console.aws.amazon.com, then navigate to https://console.aws.amazon.com/iam/home?#/security_credentials and expand the “Access Keys” dropdown to see a list of your root access keys.

If you have not yet created an access key and secret key pair, then you can click on “Create New Access Key”. You will be presented with an access key ID and secret access key pair, and you will need to save these credentials in a safe place, as you won’t be able to view your secret key again once you close the dialog window. Now that we have our access key and secret, we can continue with the AWS CLI configuration. Since the AWS CLI is installed, we can use its configure command to set it up quickly. In your terminal, type aws configure and you will be prompted for the configuration options:

AWS Access Key ID [None]: AKIAIOSFODNN7EXAMPLE

AWS Secret Access Key [None]: wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY

Default region name [None]: us-west-2

Default output format [None]: json

Make sure you set the default region to the EC2 region that you want to use. If you have any instances running, you can use the command line to list instances of type t2.micro with this command:

aws ec2 describe-instances --filters "Name=instance-type,Values=t2.micro" --query "Reservations[].Instances[].InstanceId"

This will list the InstanceId of every t2.micro instance in your default region, whatever its state. You probably don’t have any instances running, so let’s change that! With our AWS CLI configured we can return our focus to Docker Machine. First we’ll need to install Docker Machine for our operating system. You can head over to https://docs.docker.com/machine/install-machine/ and follow the install instructions for your platform. Once installed, double check the installation by running docker-machine version and you should see the version of Docker Machine you installed.
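Before provisioning anything, it can help to confirm the prerequisites from the steps above in one place. The following is a minimal sketch, not part of Docker Machine or the AWS CLI: require_tools and has_default_profile are hypothetical helper names, and the check assumes that aws configure stored your keys under the [default] profile in ~/.aws/credentials, which is where it writes them when no named profile is given.

```shell
#!/bin/sh
# Return 0 only if every tool named in the arguments is on PATH;
# each missing tool is reported on stderr.
require_tools() {
  missing=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      printf 'missing: %s\n' "$tool" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Return 0 if the credentials file given as $1 exists and
# contains a [default] profile header.
has_default_profile() {
  [ -f "$1" ] && grep -q '^\[default]' "$1"
}
```

For example, require_tools aws docker-machine docker && has_default_profile "$HOME/.aws/credentials" succeeds only when all three tools are on your PATH and a default profile exists.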

Since we have the AWS CLI installed and configured we’re able to use the amazonec2 driver. We can provision a new EC2 instance with the following command:

docker-machine create --driver amazonec2 \
--amazonec2-instance-type "t2.micro" \
--amazonec2-open-port 80 \
docker-aws

This will create an EC2 instance running Ubuntu with the Docker Engine installed. It will also add the instance to the docker-machine security group, which opens port 22 (SSH) and port 2376, the port Docker Machine uses to manage the Docker daemon. We are also passing --amazonec2-open-port 80 so that the security group allows HTTP traffic on port 80 to our instance. The last parameter of docker-machine create is the name of your Docker machine, which is also used as the name of your EC2 instance. You can call it whatever you like; in our example we called it docker-aws. Now that the remote Docker host is created, we can list our Docker machines with the command: docker-machine ls

NAME         ACTIVE   DRIVER      STATE     URL                         SWARM   DOCKER     ERRORS
docker-aws   -        amazonec2   Running   tcp://32.220.147.185:2376           v19.03.6

You can see we have a Docker machine called docker-aws running that points to our EC2 instance’s IP, but it is not our “Active” machine. In order to run docker commands such as docker ps or docker-compose up against it, we need to make docker-aws active. To do so we need to set some environment variables, and docker-machine gives us a command that prints those variables along with a ready-made command to set them. First let’s execute docker-machine env docker-aws; you will see all of the environment variables we need to set, with the last line showing the command we need to run:

$ docker-machine env docker-aws

export DOCKER_TLS_VERIFY="1"

export DOCKER_HOST="tcp://32.220.147.185:2376"

export DOCKER_CERT_PATH="/Users/<yourusername>/.docker/machine/machines/docker-aws"

export DOCKER_MACHINE_NAME="docker-aws"

# Run this command to configure your shell:

# eval "$(docker-machine env docker-aws)"

We can run the command eval "$(docker-machine env docker-aws)" and it will set our environment variables and make docker-aws our “Active” machine. You can confirm the variables are set by running env | grep DOCKER, which should list them. If you ever need to unset the variables (for instance, if you want to use your local Docker daemon again) you can run eval "$(docker-machine env -u)", which executes the following unset commands:

unset DOCKER_TLS_VERIFY

unset DOCKER_HOST

unset DOCKER_CERT_PATH

unset DOCKER_MACHINE_NAME

With eval "$(docker-machine env docker-aws)" applied, you can run docker-machine ls and you should see that docker-aws is active!

NAME         ACTIVE   DRIVER      STATE     URL                         SWARM   DOCKER     ERRORS
docker-aws   *        amazonec2   Running   tcp://32.220.147.185:2376           v19.03.6

We can now execute docker commands just like we would on our local machine; the active Docker machine becomes the Docker host that the commands are executed on. Let’s start a simple nginx container:

docker run --name simple-nginx --rm -p 80:80 -d nginx

This will start a container from the nginx image in the background and map container port 80 to port 80 on our Docker host. You can verify it is working by typing docker ps:

CONTAINER ID   IMAGE   COMMAND                  CREATED      STATUS      PORTS                NAMES
eef7c8671d5f   nginx   "docker-entrypoint.s…"   6 days ago   Up 6 days   0.0.0.0:80->80/tcp   simple-nginx

With that running, you can navigate to the EC2 instance’s external IP (or domain name) in a browser and you will see the default nginx welcome page! With the Docker machine active you can use any of the docker commands you are familiar with, such as docker run or docker stop, as well as all of the docker-compose commands, and they will be executed on the remote Docker host!
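Because the same docker commands run locally or remotely depending on your shell’s environment, it is worth checking where they will land before you start or stop containers. Here is a minimal sketch that relies only on the DOCKER_HOST and DOCKER_MACHINE_NAME variables exported by docker-machine env; docker_target is a hypothetical helper name, not a Docker command.

```shell
#!/bin/sh
# Report where docker commands will run, based on the variables
# that `docker-machine env` exports into the current shell.
docker_target() {
  if [ -n "${DOCKER_HOST:-}" ]; then
    # DOCKER_HOST is set: commands go to that remote daemon.
    printf 'remote %s %s\n' "${DOCKER_MACHINE_NAME:-unnamed}" "$DOCKER_HOST"
  else
    # DOCKER_HOST is unset or empty: commands use the local daemon.
    printf 'local\n'
  fi
}

docker_target
```

Docker Machine also provides docker-machine active, which prints the name of the active machine; the helper above only inspects the environment of the current shell.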

We’ve looked at using the docker machine amazonec2 driver to spin up an ec2 instance and use that instance as our remote docker host for managing docker containers remotely! Until next time, stay curious, stay creative.